Submitted by srchvrs on Thu, 07/01/2021 - 11:48

UPDATE: Not all of what I wrote aged well, so certain corrections are required. It was a correct observation (described below) that in a zero-shot mode, when a model creates code directly from an arbitrary prompt (i.e., it is not shown several text-to-code translation examples), GPT-3 (or similar models) that were not systematically trained on code performed rather poorly. However, subsequent work by OpenAI, "Evaluating Large Language Models Trained on Code", showed that if a model was pre-trained on a lot of publicly available code, then it acquired non-trivial coding capabilities, often producing correct (or nearly correct) solutions purely from natural language. A more up-to-date perspective is in my more recent tweet.

Automation of software development is as old as software development itself. Remember that we started by writing programs directly in machine code (and I personally have experience programming such a device). Then, a big productivity boost was achieved by writing assembly programs, which was shortly followed by the introduction of higher-level languages such as FORTRAN. The progress clearly has not stopped at that point, and we see a great deal of improvement coming from better programming languages, libraries, and tooling. In the machine learning world, people started by writing their own CUDA kernels, but now we have dynamic tensor frameworks (such as PyTorch), where the neural computation graph is defined (and modified) by a Python program. These modifications can happen dynamically, i.e., during training or inference. There are a lot of smart ways in which the progress can continue in the future. But I think that fully automated code generation from natural language is not one of them.

What are the problems with automatic code generation? One big issue is that there is no reliable technology to understand and interpret natural language. My strong belief is that sequence-to-sequence-like neural networks or language-modeling approaches do not learn much beyond a probability distribution. These technologies are ground-breaking, and it is nothing short of amazing that we can have close-to-human-quality machine translation. However, these models do make stupid mistakes here and there. Humans make them too, but the output of a machine translation system is easy to post-edit and the scale of errors can be pretty small. What makes this possible is that machine translation is (loosely speaking) a content-preserving process, which has a high degree of fidelity in terms of lexical, syntactic, and semantic similarity between the source and the target. It also has a high degree of locality: A sentence-level translation, which does not take the document context into account, can sometimes be quite accurate. This is one of the reasons why machine translation was possibly the first successful AI application, which was shown to work in a very narrow domain more than 50 years ago.

Many people think that post-editing of the GPT-3 output would work as well as post-editing of the machine translation output. However, software development is far from this idealistic view. Software is created as a fusion of a developer's knowledge and common sense, user requirements, and colleagues' advice and feedback. There is no well-defined source and no well-defined target. There is also a complicated feedback loop coming from debugging and testing. It is simply impossible to express these requirements through a reasonably small set of examples. Furthermore, writing out such examples is time-consuming and error-prone (there is no precise semantics in example-based programming). It is even more time-consuming to fix the mistakes generators make.

I also strongly believe that current AI systems can greatly help to improve autocomplete functionality, but pushing the current tech to the level of "pilot"-like automation is likely impossible. Unfortunately, much of the discussion on the topic is influenced by early testers whose positive feedback has been magnified 1000x while all the negative feedback has been largely muffled. Fortunately, the EleutherAI and HuggingFace teams made a GPT-Neo demo available to everyone and we can now poke it. We are interested in SQL generation, which is the only code-generation option.

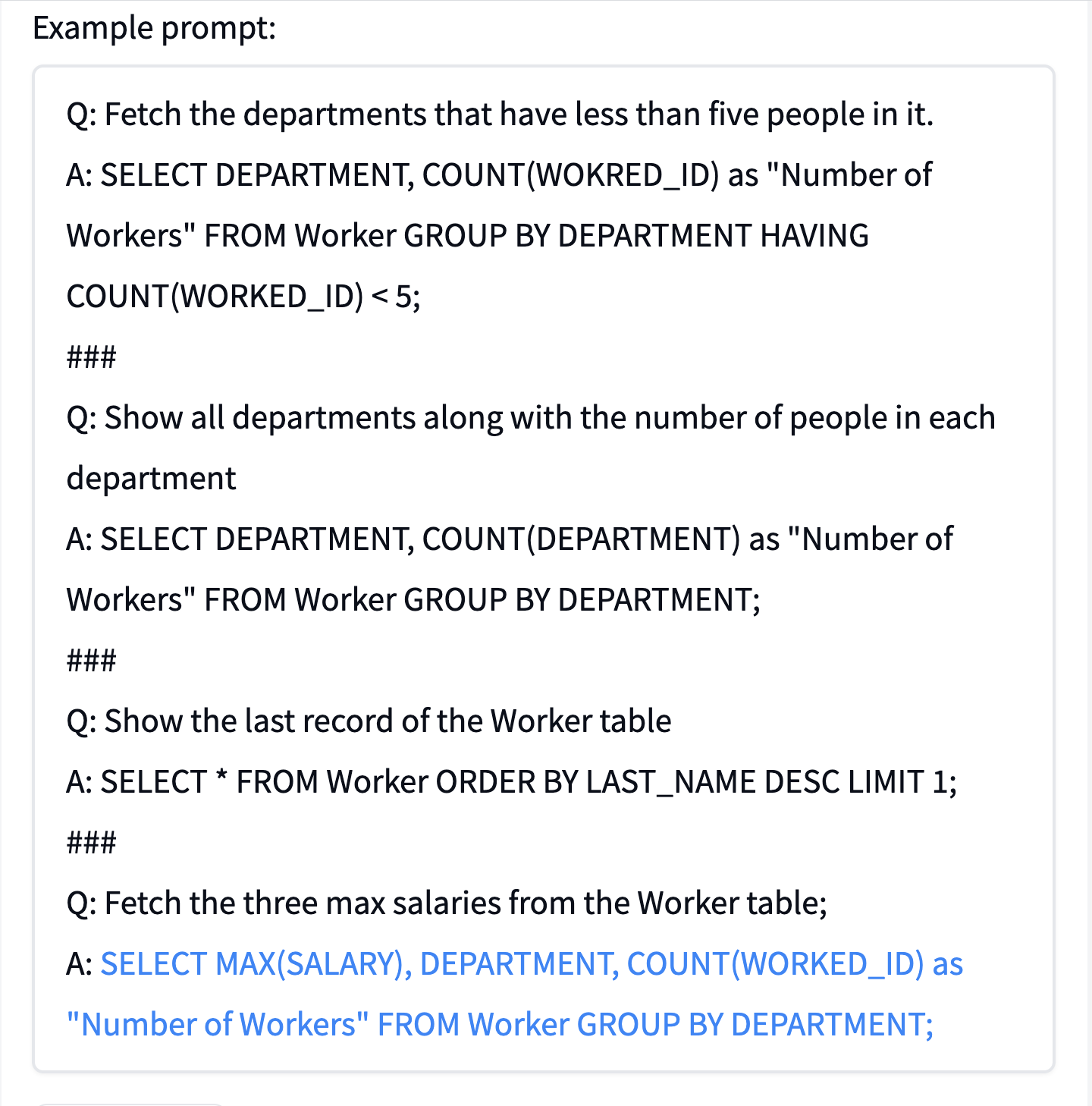

First, one can notice that the process is not deterministic: Each time you click "generate" the system may generate a different answer. The original HuggingFace example asks to "Fetch the three max salaries from the Worker table". I clicked multiple times, but all of the answers seem to be incorrect. Sometimes they are quite far from a correct one; see, e.g., this example:

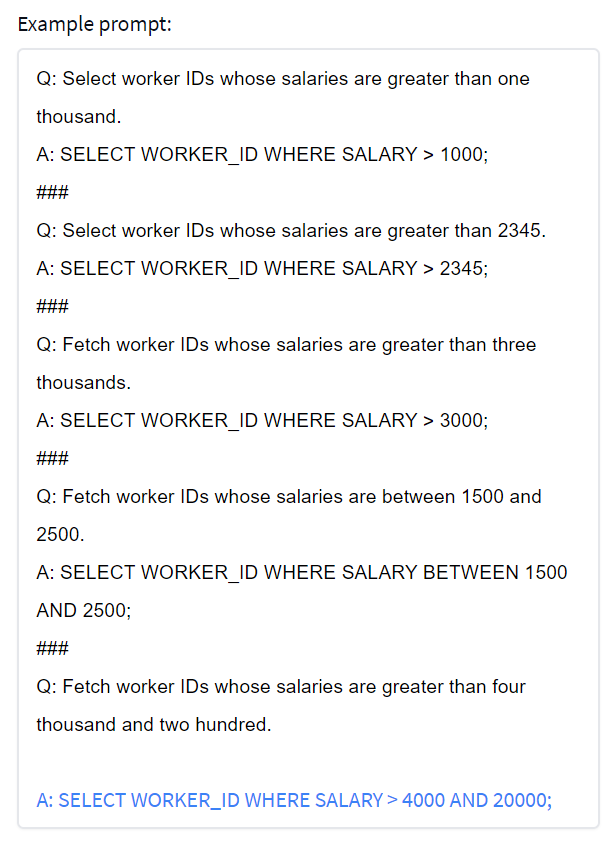
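One plausible explanation for the non-determinism (an assumption, since the decoding settings of the demo are not stated here) is that the next token is sampled from the model's probability distribution rather than always chosen greedily. The toy sketch below contrasts the two strategies; the token list, the logits, and the function names are made up for illustration and do not come from GPT-Neo.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities (temperature is a hypothetical knob)."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(tokens, logits):
    """Always return the highest-scoring token: fully deterministic."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return tokens[best]


def sample_pick(tokens, logits, rng, temperature=1.0):
    """Draw one token at random according to the softmax probabilities."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Made-up next-token candidates and scores, for illustration only.
TOKENS = ["SELECT", "FROM", "WHERE", "ORDER"]
LOGITS = [2.0, 1.5, 1.0, 0.5]

rng = random.Random(0)
greedy_outputs = {greedy_pick(TOKENS, LOGITS) for _ in range(200)}
sampled_outputs = {sample_pick(TOKENS, LOGITS, rng) for _ in range(200)}

print("greedy:", greedy_outputs)    # a single token every time
print("sampled:", sampled_outputs)  # several different tokens
```

With sampling, repeated clicks can yield different continuations even for an identical prompt, which would be consistent with the behavior described above.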

I also tried to replace the word "fetch" with the word "select" in the prompt, but the results were still disastrous. One may argue that EleutherAI/HuggingFace came up with a prompt that was too complicated. Thus, I tried a simpler version, which had more consistent prompts:

Q: Select worker IDs whose salaries are greater than one thousand.

A: SELECT WORKER_ID WHERE SALARY > 1000;

###

Q: Select worker IDs whose salaries are greater than 2345.

A: SELECT WORKER_ID WHERE SALARY > 2345;

###

Q: Fetch worker IDs whose salaries are greater than three thousands.

A: SELECT WORKER_ID WHERE SALARY > 3000;

###

Q: Fetch worker IDs whose salaries are between 1500 and 2500.

A: SELECT WORKER_ID WHERE SALARY BETWEEN 1500 AND 2500;

###

Q: Fetch worker IDs whose salaries are greater than 4321.

A:
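The prompt above follows a simple few-shot template: question/answer pairs separated by `###`, followed by an unanswered question. A minimal sketch of how such a prompt could be assembled programmatically is given below; the function name `build_few_shot_prompt` is hypothetical, and the example pairs are copied verbatim from the prompt above (including the SQL exactly as written there).

```python
def build_few_shot_prompt(examples, question, separator="###"):
    """Join (question, answer) pairs and leave the final answer slot open."""
    blocks = [f"Q: {q}\n\nA: {a}" for q, a in examples]
    blocks.append(f"Q: {question}\n\nA:")
    return f"\n\n{separator}\n\n".join(blocks)


# Example pairs copied verbatim from the prompt above.
EXAMPLES = [
    ("Select worker IDs whose salaries are greater than one thousand.",
     "SELECT WORKER_ID WHERE SALARY > 1000;"),
    ("Select worker IDs whose salaries are greater than 2345.",
     "SELECT WORKER_ID WHERE SALARY > 2345;"),
    ("Fetch worker IDs whose salaries are greater than three thousands.",
     "SELECT WORKER_ID WHERE SALARY > 3000;"),
    ("Fetch worker IDs whose salaries are between 1500 and 2500.",
     "SELECT WORKER_ID WHERE SALARY BETWEEN 1500 AND 2500;"),
]

prompt = build_few_shot_prompt(
    EXAMPLES, "Fetch worker IDs whose salaries are greater than 4321."
)
print(prompt)
```

Swapping only the last question makes it easy to probe how robust the generator is to small rewordings.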

This prompt consistently generates a correct answer. However, the system still breaks easily, e.g., when I ask to retrieve salaries greater than four thousand and two hundred:

In conclusion, there is little doubt that language-to-code generation works in some toy cases, but in most other cases it does not. I suspect that the technology is not there yet: It is completely unclear how the current predictive tech, which Metzler et al. called dilettante, can be taken close to the level of accuracy of modern compilers, which is, in fact, necessary for it to be useful. Of course, the GPT-3 trained by Microsoft and OpenAI is better than the EleutherAI/HuggingFace GPT-Neo, in particular, because it was trained in a semi-supervised fashion. Yet, its generation algorithm comes without any accuracy guarantees, which makes the model dangerous. In any case, I am looking forward to testing it, but the Microsoft/OpenAI coding "co-pilot" is not open to the general public yet (there is a wait list).

Submitted by srchvrs on Mon, 04/19/2021 - 10:42

I just finished listening to the book "AI Superpowers: China, Silicon Valley, and the New World Order". It was written by a leading scientist and technologist, Kai-Fu Lee, who under the supervision of the Turing Award winner Raj Reddy created one of the first continuous speech recognition systems. He then held executive positions at several corporations including Microsoft and Google. I largely agree with Kai-Fu Lee's assessment of China's potential, but it is hard to agree with his assessment of AI. The book was written at the peak of the deep learning hype and it completely ignores shortcomings of deep learning such as poor performance on long-tail samples, adversarial samples, or samples coming from a different distribution: It is not clear why these important issues are omitted. As we realise now, a "super-human" performance on datasets like Librispeech or Imagenet does show how much progress we have made, but it does not directly translate into viable products. For example, it is not hard to see that current dictation systems are often barely usable and the speech recognition output often requires quite a bit of post-editing.

Given the overly optimistic assessment of deep learning capabilities, it is somewhat unsurprising Kai-Fu Lee suggests that once AI is better than humans we should turn into a society of compassionate caregivers and/or social workers. I agree that a large part of the population could fill these roles, which are important and should be well paid! But I personally dream about a society of technologists, where at least 20-50% of the population are scientists, engineers, and tinkerers who have intellectually demanding (or creative) jobs. Some say it would be impossible, but we do not really know. A few centuries ago, only a small fraction of the population were literate: Now nearly everybody can read and write. Very likely our education system has huge flaws starting from pre-school and ending up at the PhD level, which works as a high-precision but low-recall sieve that selects the most curious, talented, and hardworking mostly from a small pool of privileged people. I speculate we can do much better than this. In all fairness, Kai-Fu Lee does note that AI may take much longer to deploy. However, my impression is that he does not consider this idea in all seriousness. I would reiterate that the discussion about the difficulties of applying existing tech to real world problems is nearly completely missing.

Although it is a subject of hot debates and scientific scrutiny alike, I think the current AI systems are exploiting conditional probabilities rather than doing actual reasoning. Therefore, they perform poorly on long tail and adversarial samples or samples coming from a different distribution. They cannot explain and, most importantly, reconsider their decisions in the presence of extra evidence (like smart and open-minded humans do). Kai-Fu Lee on multiple occasions praises an ability of deep learning systems to capture non-obvious correlations. However, in many cases these correlations are spurious and are present only in the training set.

On the positive side, Kai-Fu Lee seems to care a lot about humans whose jobs are displaced by AI. However, as I mentioned before, he focuses primarily on the apocalyptic scenario where machines are rapidly taking over the jobs. Thus, he casually discusses the automation of a profession as tricky as software engineering, whereas in reality it is difficult to fully replace even truckers (despite more than 30 years of research on autonomous driving). More realistically, we are moving towards a society of computer-augmented humans, where computers perform routine tasks and humans set higher-level goals and control their execution. We have been augmented by tools (at first simple and now very sophisticated) for hundreds of thousands of years already, but the augmentation process has accelerated recently. It is, however, still very difficult for computers to consume (on their own) raw and unstructured information and convert it into a format that simple algorithms can handle. For example, a lot of mathematics may be automatable once a proper formalization is done, but formalization seems to be a much more difficult process compared to finding correlations in data.

In conclusion, there are a lot of Kai-Fu Lee's statements that are impossible to disagree with. Most importantly, China is rapidly becoming a scientific (and AI) powerhouse. Meanwhile, there has been a lot of complacency in the US (and other Western countries) with respect to this change. Not only is there little progress in improving basic school education and increasing the spending on fundamental sciences, but the competitiveness of US companies has been adversely affected by regressive immigration policies (especially during the Trump presidency). It is true that the West is still leading, but China is catching up quickly. This is especially worrisome given a recent history of bullying neighboring states. The next Sputnik moment is coming and we had better be prepared.

Submitted by srchvrs on Thu, 04/15/2021 - 13:17

Due to high annotation costs, making the best use of existing human-created training data is an important research direction. We, therefore, carried out a systematic evaluation of the transferability of BERT-based neural ranking models across five English datasets. Previous studies focused primarily on zero-shot and few-shot transfer from a large dataset to a dataset with a small number of queries. In contrast, each of our collections has a substantial number of queries, which enables a full-shot evaluation mode and improves the reliability of our results. Furthermore, since source dataset licenses often prohibit commercial use, we compare transfer learning to training on pseudo-labels generated by a BM25 scorer. We find that training on pseudo-labels (possibly with subsequent fine-tuning using a modest number of annotated queries) can sometimes produce a competitive or better model compared to transfer learning. I am quite happy our study is accepted for presentation at SIGIR 2021.

We have tried to answer several research questions related to the usefulness of transfer learning and pseudo-labeling in the small and big data regimes. It was quite interesting to verify the pseudo-labeling results of a now well-known paper by Dehghani, Zamani, and friends, "Neural ranking models with weak supervision," where they showed that training a student neural network using BM25 as a teacher model allows one to greatly outperform BM25. Dehghani et al. trained a pre-BERT neural model using an insane amount of computation. However, we thought a BERT-based model, which is already massively pre-trained, could be fine-tuned more effectively. And, indeed, on all the collections we were able to outperform BM25 in just a few hours. However, the gains were rather modest: 5-15%.
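To make the idea of BM25 pseudo-labeling concrete, here is a minimal sketch under several assumptions: it uses a common BM25 variant with a smoothed, non-negative IDF and the conventional defaults `k1=1.2`, `b=0.75`, whitespace tokenization, and a deliberately simple labeling scheme (top-ranked document as the positive, lowest-ranked documents as negatives). None of these choices is claimed to match the exact setup of the study or of Dehghani et al.; the function names and the toy collection are hypothetical.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score every document against the query with a common BM25 variant."""
    tokenized = {doc_id: text.lower().split() for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized.values()) / n_docs

    # Document frequency: in how many documents each term occurs.
    doc_freq = Counter()
    for tokens in tokenized.values():
        doc_freq.update(set(tokens))

    scores = {}
    for doc_id, tokens in tokenized.items():
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            df = doc_freq[term]
            idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[doc_id] = score
    return scores


def pseudo_label(queries, docs, num_negatives=1):
    """Assumed scheme: best BM25 hit is the positive, worst hits are negatives."""
    labels = {}
    for query_id, query in queries.items():
        scores = bm25_scores(query, docs)
        ranked = sorted(scores, key=scores.get, reverse=True)
        labels[query_id] = {
            "positive": ranked[0],
            "negatives": ranked[-num_negatives:],
        }
    return labels


# Toy collection, for illustration only.
DOCS = {
    "d1": "neural ranking models for text retrieval",
    "d2": "cooking pasta with tomato sauce",
    "d3": "ranking web pages with link analysis",
}
QUERIES = {"q1": "neural ranking models"}

labels = pseudo_label(QUERIES, DOCS)
print(labels)
```

The resulting (query, positive, negative) triples need no human annotation and use only in-domain text, so they can be fed to a ranker as ordinary training data.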

We find that transfer learning has mixed success, which is not totally surprising due to a potential distribution shift: Pseudo-labeling, in contrast, uses only in-domain text data. Even though transfer learning and/or pseudo-labeling can both be effective, it is natural to try improving the model using a small number of available in-domain queries. However, this is not always possible due to the "A Little Bit Is Worse Than None" phenomenon, where training on small amounts of in-domain data degrades performance. Previously it was observed on Robust04, but we confirm it can happen elsewhere as well. Clearly, future work should focus on fixing this issue.

We also observe that beating BM25 sometimes requires quite a few queries. Some other groups obtained better results in training/fine-tuning a BERT-based model using a few queries from scratch (without using a transferred model). One reason why this might be the case is that our collections have rather shallow pools of judged queries (compared to TREC collections): MS MARCO has about one positive example per query and other collections have three to four. Possibly, few-shot training can be improved with target corpus pre-training. We have found, though, that target corpus pre-training is only marginally useful in the full-data regime. Thus, we have not used it in the few-data regime. In retrospect, this could have made a difference and we need to consider this option in the future, especially IR-specific pre-training approaches such as PROP. Finally, it was also suggested to compare fine-tuning BERT with fine-tuning a sequence-to-sequence model, as the latter may train more effectively in the small-data regime.

Submitted by srchvrs on Thu, 03/04/2021 - 21:52

We studied the utility of the lexical translation model (IBM Model 1) for English text retrieval, in particular, its neural variants that are trained end-to-end. I am quite happy that our study is going to be presented at ECIR 2021. Using traditional and/or neural Model 1, we produced the best neural and non-neural runs on the MS MARCO document ranking leaderboard in late 2020. Also, at the moment of writing this blog post, our BERT-Model1 submission holds second place. Besides leaderboarding, we have made several interesting findings related to efficiency, effectiveness, and interpretability, which we describe below. Of course, getting strong results requires more than a good architecture, but we find it interesting that some of the top submissions can be achieved using a partially interpretable model.

First of all, given enough training data, the traditional, i.e., non-neural, IBM Model 1 can substantially boost the performance of a retrieval system: Using the traditional Model 1, we produced the best traditional run on the MS MARCO leaderboard as of 2020/12/06. However, the non-neural Model 1 does not work very well when queries are much shorter than the respective relevant documents. We suspect this was the main reason why this model was not used much by the retrieval community in the past.

We can, nevertheless, come up with an effective neural parametrization of this traditional model, which leads to a substantial improvement on MS MARCO data (for both passage and document retrieval). Furthermore, the resulting context-free neural Model 1 can be pruned: As a result we get a sparse matrix of conditional probabilities. Sparsification does not decrease accuracy, but the sparsified model can run on CPU thousands of times faster compared to a BERT-based ranker. This model can improve performance of the candidate-generation stage without expensive index-time precomputation and query-time manipulation with large tensors. We are not aware of any other neural re-ranking model that has this nice property.
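The pruning step can be pictured as follows. This is only a sketch under stated assumptions: the translation table is stored as a nested dictionary keyed by document token, and entries below a hypothetical cut-off of `1e-3` are dropped without renormalization. The actual threshold and storage format used in the study are not given in this post, and the toy probabilities are made up.

```python
def prune_translation_table(table, threshold):
    """Keep only translation probabilities T(q|d) that reach the threshold."""
    sparse = {}
    for doc_token, row in table.items():
        kept = {q_token: prob for q_token, prob in row.items() if prob >= threshold}
        if kept:  # drop document tokens whose rows became empty
            sparse[doc_token] = kept
    return sparse


# Made-up probabilities T(query_token | doc_token), for illustration only.
DENSE_TABLE = {
    "automobile": {"car": 0.6, "vehicle": 0.3, "banana": 0.0005},
    "quick": {"fast": 0.5, "car": 0.0002},
    "the": {"car": 0.0001},
}

sparse_table = prune_translation_table(DENSE_TABLE, threshold=1e-3)
dense_entries = sum(len(row) for row in DENSE_TABLE.values())
sparse_entries = sum(len(row) for row in sparse_table.values())
print(sparse_table)
print(f"kept {sparse_entries} of {dense_entries} entries")
```

Once most entries are gone, scoring a query-document pair reduces to a handful of dictionary (or sparse-matrix) lookups, which is why such a model can run cheaply on a CPU.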

A neural Model 1 can also be used as an aggregator layer on top of contextualized embeddings produced by BERT. This layer is quite interpretable: BERT-Model1 generates a single similarity score for every pair of a query and a document token, which can be interpreted as a conditional translation probability. Then these scores are combined using a standard product-of-sum formula:

$$
P(Q|D)=\prod\limits_{q \in Q} \sum\limits_{d \in D} T(q|d) P(d|D),
$$

where $Q$ is a query, $q$ is a query token, $D$ is a document, and $d$ is a document token. Although more studies are needed to verify this hypothesis, we think having an interpretable layer can be useful for model debugging. In any case, this layer has better interpretability compared to prior work, which uses a kernel-based formula by Xiong et al. to compute soft-match counts over contextualized embeddings. Because each pair of query-document tokens produces several soft-match values corresponding to different thresholds, it is problematic to aggregate these values in an explainable way.
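The product-of-sum formula is easy to evaluate directly. The sketch below does so in log space for a context-free (non-BERT) toy case. It assumes that $P(d|D)$ is the relative frequency of token $d$ in document $D$ and that $T(q|d)$ is looked up in a dictionary keyed by (query token, document token); both are assumptions made for illustration, as are the small `floor` constant that avoids taking the logarithm of zero and the toy probabilities. Collection-level smoothing is omitted.

```python
import math
from collections import Counter


def log_query_likelihood(query_tokens, doc_tokens, translation, floor=1e-9):
    """Compute log P(Q|D) = sum_q log sum_d T(q|d) * P(d|D)."""
    doc_len = len(doc_tokens)
    counts = Counter(doc_tokens)
    log_prob = 0.0
    for q in query_tokens:
        # Inner sum over distinct document tokens, weighted by P(d|D).
        inner = sum(
            translation.get((q, d), 0.0) * (count / doc_len)
            for d, count in counts.items()
        )
        log_prob += math.log(max(inner, floor))
    return log_prob


# Made-up translation probabilities T(q|d), keyed by (query token, doc token).
TRANSLATION = {
    ("car", "car"): 0.8,
    ("car", "automobile"): 0.6,
    ("fast", "quick"): 0.5,
}

query = ["fast", "car"]
document = ["quick", "automobile"]

score = log_query_likelihood(query, document, TRANSLATION)
# Per-token sums: fast -> 0.5 * 0.5 = 0.25, car -> 0.6 * 0.5 = 0.30
print(math.exp(score))  # product of the two sums, about 0.075
```

Because every term of the inner sum belongs to one (query token, document token) pair, one can inspect which document tokens contributed most to the score of each query token.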

In conclusion, we note that this partial interpretability comes virtually for free. It does not degrade efficiency or accuracy. In fact, BERT-Model1 has slightly better accuracy compared to a vanilla BERT (monoBERT) that makes predictions on truncated documents. This small accuracy gain, however, was likely key to obtaining strong results on MS MARCO.

Submitted by srchvrs on Wed, 03/03/2021 - 21:02

It was both sad and enlightening to (virtually) attend a memorial honoring the former Language Technologies Institute director and founder Jaime Carbonell, who untimely passed away one year ago. Jaime started working on NLP and machine translation when very few people believed these tasks were doable. It was a risky move, which required a lot of courage, foresight, and energy, not to mention scientific and organizational talent. He had it all and he had a huge impact on the field as a scientist, advisor, and leader of an influential language-research institution. I had very few personal interactions with Jaime, but my life was greatly impacted by the institute that Jaime created and that ventured to take an old dude like myself aboard. I hope we, all the former and current students, can become the technology and thought leaders Jaime wanted us to be.
